Journal Description

Entropy

Entropy is an international and interdisciplinary peer-reviewed open access journal of entropy and information studies, published monthly online by MDPI. The International Society for the Study of Information (IS4SI) and the Spanish Society of Biomedical Engineering (SEIB) are affiliated with Entropy, and their members receive a discount on the article processing charge.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, SCIE (Web of Science), Inspec, PubMed, PMC, Astrophysics Data System, and other databases.

- Journal Rank: JCR - Q2 (Physics, Multidisciplinary) / CiteScore - Q1 (Mathematical Physics)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 20.8 days after submission; acceptance to publication is undertaken in 2.9 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Testimonials: See what our editors and authors say about Entropy.

- Companion journals for Entropy include: Foundations, Thermo and MAKE.

Impact Factor: 2.7 (2022); 5-Year Impact Factor: 2.6 (2022)

Latest Articles

Learning in Deep Radial Basis Function Networks

Entropy 2024, 26(5), 368; https://doi.org/10.3390/e26050368 (registering DOI) - 26 Apr 2024

Abstract

Learning in neural networks with locally tuned neuron models, such as radial basis function (RBF) networks, is often seen as unstable, in particular when multi-layered architectures are used. Furthermore, universal approximation theorems for single-layered RBF networks are very well established; therefore, deeper architectures are theoretically not required. Consequently, RBFs are mostly used in a single-layered manner. However, deep neural networks have proven their effectiveness on many different tasks. In this paper, we show that deeper RBF architectures with multiple radial basis function layers can be designed together with efficient learning schemes. We introduce an initialization scheme for deep RBF networks based on k-means clustering and covariance estimation. We further show how to make use of convolutions to speed up the calculation of the Mahalanobis distance in a partially connected way, similar to convolutional neural networks (CNNs). Finally, we evaluate our approach on image classification as well as speech emotion recognition tasks. Our results show that deep RBF networks perform very well, with comparable results to other deep neural network types, such as CNNs.
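The initialization idea in this abstract (k-means for the centers, covariance estimation for the Mahalanobis metric) can be illustrated with a minimal single-layer sketch. This is not the authors' implementation: the helper names `kmeans`, `init_rbf_layer`, and `rbf_layer`, the Gaussian activation `exp(-0.5 * d^2)`, the small ridge term added to each covariance, and the toy data are all assumptions made for illustration, and the convolutional speed-up and deep stacking described in the paper are not shown.

```python
import numpy as np

def kmeans(X, k, n_iter=20, seed=0):
    """Plain Lloyd's k-means; returns the centers and the final labels."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=k, replace=False)].copy()
    for _ in range(n_iter):
        d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):          # leave empty clusters where they are
                centers[j] = X[labels == j].mean(axis=0)
    d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    return centers, d2.argmin(axis=1)

def init_rbf_layer(X, k):
    """Centers from k-means, one inverse covariance matrix per cluster."""
    centers, labels = kmeans(X, k)
    dim = X.shape[1]
    inv_covs = np.empty((k, dim, dim))
    for j in range(k):
        pts = X[labels == j]
        # Fall back to the identity when a cluster is too small to estimate from.
        cov = np.cov(pts, rowvar=False) if len(pts) > 1 else np.eye(dim)
        # Assumed ridge term keeps the matrix invertible.
        inv_covs[j] = np.linalg.inv(cov + 1e-6 * np.eye(dim))
    return centers, inv_covs

def rbf_layer(X, centers, inv_covs):
    """Gaussian RBF activations based on the squared Mahalanobis distance."""
    diff = X[:, None, :] - centers[None, :, :]                 # (n, k, dim)
    d2 = np.einsum("nki,kij,nkj->nk", diff, inv_covs, diff)    # (n, k)
    return np.exp(-0.5 * d2)

# Hypothetical toy data: two Gaussian blobs in 2D.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0.0, 1.0, (50, 2)), rng.normal(5.0, 1.0, (50, 2))])

centers, inv_covs = init_rbf_layer(X, k=2)
A = rbf_layer(X, centers, inv_covs)   # one activation per sample and RBF unit
```

Each unit responds with 1 at its own center and decays with the Mahalanobis distance, so the covariance estimate sets the shape of each receptive field.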

Open Access Feature Paper Article

Canonical vs. Grand Canonical Ensemble for Bosonic Gases under Harmonic Confinement

by Andrea Crisanti, Luca Salasnich, Alessandro Sarracino and Marco Zannetti

Entropy 2024, 26(5), 367; https://doi.org/10.3390/e26050367 (registering DOI) - 26 Apr 2024

Abstract

We analyze the general relation between canonical and grand canonical ensembles in the thermodynamic limit. We begin our discussion by deriving, with an alternative approach, some standard results first obtained by Kac and coworkers in the late 1970s. Then, motivated by the Bose–Einstein condensation (BEC) of trapped gases with a fixed number of atoms, which is well described by the canonical ensemble, and by the recent groundbreaking experimental realization of BEC with photons in a dye-filled optical microcavity under genuine grand canonical conditions, we apply our formalism to a system of non-interacting Bose particles confined in a two-dimensional harmonic trap. We discuss in detail the mathematical origin of the inequivalence of ensembles observed in the condensed phase, giving rise to the so-called grand canonical catastrophe of density fluctuations. We also provide explicit analytical expressions for the internal energy and specific heat and compare them with available experimental data. For these quantities, we show the equivalence of ensembles in the thermodynamic limit.

(This article belongs to the Special Issue Matter-Aggregating Systems at a Classical vs. Quantum Interface)

Open Access Perspective

Quo Vadis Particula Physica?

by Xavier Calmet

Entropy 2024, 26(5), 366; https://doi.org/10.3390/e26050366 - 26 Apr 2024

Abstract

In this brief paper, I give a very personal account of the state of particle physics on the occasion of Paul Frampton’s 80th birthday.

(This article belongs to the Special Issue Particle Theory and Theoretical Cosmology—Dedicated to Professor Paul Howard Frampton on the Occasion of His 80th Birthday)

Open Access Article

Impact of Normalization on Entropy-Based Weights in Hellwig’s Method: A Case Study on Evaluating Sustainable Development in the Education Area

by Ewa Roszkowska and Tomasz Wachowicz

Entropy 2024, 26(5), 365; https://doi.org/10.3390/e26050365 - 26 Apr 2024

Abstract

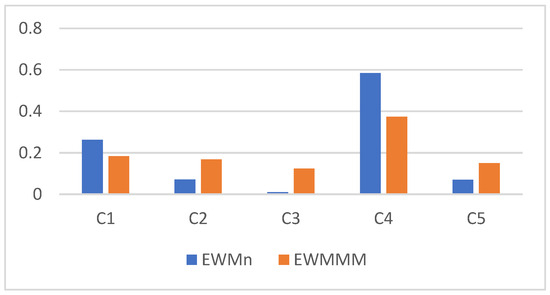

Determining criteria weights plays a crucial role in multi-criteria decision analyses. Entropy is a significant measure in information science, and several multi-criteria decision-making methods utilize the entropy weight method (EWM). In the literature, two approaches for determining the entropy weight method can be

[...] Read more.

Determining criteria weights plays a crucial role in multi-criteria decision analyses. Entropy is a significant measure in information science, and several multi-criteria decision-making methods utilize the entropy weight method (EWM). In the literature, two approaches for determining the entropy weight method can be found. One involves normalization before calculating the entropy values, while the second does not. This paper investigates the normalization effect for entropy-based weights and Hellwig’s method. To compare the influence of various normalization methods in both the EWM and Hellwig’s method, a study evaluating the sustainable development of EU countries in the education area in the year 2021 was analyzed. The study used data from Eurostat related to European countries’ realization of the SDG 4 goal. It is observed that vector normalization and sum normalization did not change the entropy-based weights. In the case study, the max–min normalization influenced EWM weights. At the same time, these weights had only a very weak impact on the final rankings of countries with respect to achieving the SDG 4 goal, as determined by Hellwig’s method. The results are compared with the outcome obtained by Hellwig’s method with equal weights. The simulation study was conducted by modifying Eurostat data to investigate how the different normalization relationships discovered among the criteria affect entropy-based weights and Hellwig’s method results.
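The observation that sum and vector normalization leave entropy-based weights unchanged can be checked numerically. The sketch below assumes the common textbook form of the entropy weight method (column shares, Shannon entropy scaled by ln m, weights proportional to 1 − E), which may differ in detail from the variant used in the paper; the function name `entropy_weights` and the decision matrix are hypothetical and are not Eurostat data.

```python
import numpy as np

def entropy_weights(X):
    """Entropy weight method for an (m alternatives x n criteria) matrix
    of non-negative values (assumed textbook formulation)."""
    X = np.asarray(X, dtype=float)
    m = X.shape[0]
    P = X / X.sum(axis=0)                        # column-wise shares p_ij
    safe = np.where(P > 0, P, 1.0)               # treat 0 * ln(0) as 0
    E = -(P * np.log(safe)).sum(axis=0) / np.log(m)
    d = 1.0 - E                                  # degree of diversification
    return d / d.sum()

# Hypothetical decision matrix: 4 alternatives, 3 criteria.
X = np.array([[7.0, 120.0, 0.30],
              [9.0, 100.0, 0.35],
              [8.0, 300.0, 0.32],
              [6.0, 150.0, 0.31]])

w_raw = entropy_weights(X)
w_sum = entropy_weights(X / X.sum(axis=0))                   # sum normalization
w_vec = entropy_weights(X / np.sqrt((X ** 2).sum(axis=0)))   # vector normalization
w_minmax = entropy_weights(
    (X - X.min(axis=0)) / (X.max(axis=0) - X.min(axis=0))    # max-min normalization
)
```

Sum and vector normalization only rescale each column by a constant, which cancels when the shares are formed, so `w_sum` and `w_vec` coincide with `w_raw`. Max–min normalization also shifts each column, so `w_minmax` generally differs.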

(This article belongs to the Section Multidisciplinary Applications)

Open Access Article

Minimizing Entropy and Complexity in Creative Production from Emergent Pragmatics to Action Semantics

by Stephen Fox

Entropy 2024, 26(5), 364; https://doi.org/10.3390/e26050364 - 26 Apr 2024

Abstract

New insights into intractable industrial challenges can be revealed by framing them in terms of natural science. One intractable industrial challenge is that creative production can be much more financially expensive and time consuming than standardized production. Creative products include a wide range

[...] Read more.

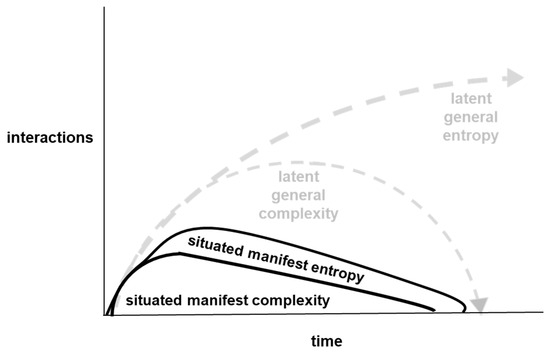

New insights into intractable industrial challenges can be revealed by framing them in terms of natural science. One intractable industrial challenge is that creative production can be much more financially expensive and time consuming than standardized production. Creative products include a wide range of goods that have one or more original characteristics. The scaling up of creative production is hindered by high financial production costs and long production durations. In this paper, creative production is framed in terms of interactions between entropy and complexity during progressions from emergent pragmatics to action semantics. An analysis of interactions between entropy and complexity is provided that relates established practice in creative production to organizational survival in changing environments. The analysis in this paper is related to assembly theory, which is a recent theoretical development in natural science that addresses how open-ended generation of complex physical objects can emerge from selection in biology. Parallels between assembly practice in industrial production and assembly theory in natural science are explained through constructs that are common to both, such as assembly index. Overall, analyses reported in the paper reveal that interactions between entropy and complexity underlie intractable challenges in creative production, from the production of individual products to the survival of companies.

(This article belongs to the Special Issue Entropy and Organization in Natural and Social Systems II)

Open Access Article

An Entropic Analysis of Social Demonstrations

by Daniel Rico and Yérali Gandica

Entropy 2024, 26(5), 363; https://doi.org/10.3390/e26050363 - 25 Apr 2024

Abstract

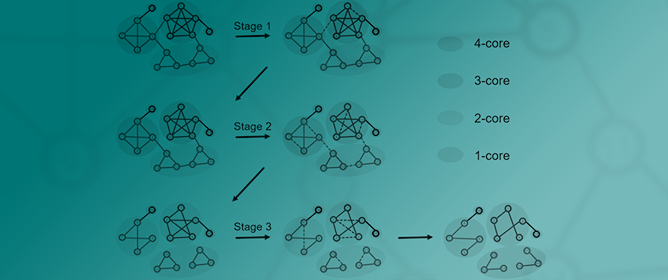

Social media has dramatically influenced how individuals and groups express their demands, concerns, and aspirations during social demonstrations. The study of X or Twitter hashtags during those events has revealed the presence of some temporal points characterised by high correlation among their participants.

[...] Read more.

Social media has dramatically influenced how individuals and groups express their demands, concerns, and aspirations during social demonstrations. The study of X or Twitter hashtags during those events has revealed the presence of some temporal points characterised by high correlation among their participants. It has also been reported that the connectivity presents a modular-to-nested transition at the point of maximum correlation. The present study aims to determine whether it is possible to characterise this transition using entropic-based tools. Our results show that entropic analysis can effectively find the transition point to the nested structure, allowing researchers to know that the transition occurs without the need for a network representation. The entropic analysis also shows that the modular-to-nested transition is characterised not by the diversity in the number of hashtags users post but by how many hashtags they share.

(This article belongs to the Special Issue Complex Systems Approach to Social Dynamics)

Open Access Article

Stochastic Compartment Model with Mortality and Its Application to Epidemic Spreading in Complex Networks

by Téo Granger, Thomas M. Michelitsch, Michael Bestehorn, Alejandro P. Riascos and Bernard A. Collet

Entropy 2024, 26(5), 362; https://doi.org/10.3390/e26050362 - 25 Apr 2024

Abstract

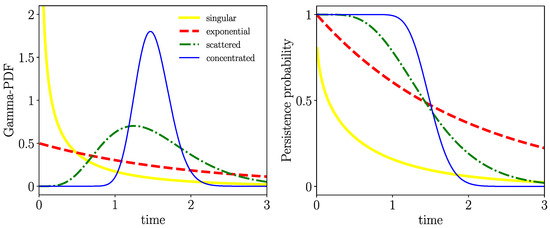

We study epidemic spreading in complex networks by a multiple random walker approach. Each walker performs an independent simple Markovian random walk on a complex undirected (ergodic) random graph where we focus on the Barabási–Albert (BA), Erdös–Rényi (ER), and Watts–Strogatz (WS) types. Both

[...] Read more.

We study epidemic spreading in complex networks by a multiple random walker approach. Each walker performs an independent simple Markovian random walk on a complex undirected (ergodic) random graph where we focus on the Barabási–Albert (BA), Erdös–Rényi (ER), and Watts–Strogatz (WS) types. Both walkers and nodes can be either susceptible (S) or infected and infectious (I), representing their state of health. Susceptible nodes may be infected by visits of infected walkers, and susceptible walkers may be infected by visiting infected nodes. No direct transmission of the disease among walkers (or among nodes) is possible. This model mimics a large class of diseases such as Dengue and Malaria with the transmission of the disease via vectors (mosquitoes). Infected walkers may die during the time span of their infection, introducing an additional compartment D of dead walkers. Contrary to the walkers, there is no mortality of infected nodes. Infected nodes always recover from their infection after a random finite time span. This assumption is based on the observation that infectious vectors (mosquitoes) are not ill and do not die from the infection. The infectious time spans of nodes and walkers, and the survival times of infected walkers, are represented by independent random variables. We derive stochastic evolution equations for the mean-field compartmental populations with the mortality of walkers and delayed transitions among the compartments. From linear stability analysis, we derive the basic reproduction numbers

(This article belongs to the Special Issue Random Walks and Stochastic Processes in Complex Systems: From Physics to Socio-Economic Phenomena)

Open Access Article

Sensorless Speed Estimation of Induction Motors through Signal Analysis Based on Chaos Using Density of Maxima

by Marlio Antonio Silva, Jose Anselmo Lucena-Junior, Julio Cesar da Silva, Francisco Antonio Belo, Abel Cavalcante Lima-Filho, Jorge Gabriel Gomes de Souza Ramos, Romulo Camara and Alisson Brito

Entropy 2024, 26(5), 361; https://doi.org/10.3390/e26050361 - 25 Apr 2024

Abstract

Three-phase induction motors are widely used in various industrial sectors and are responsible for a significant portion of the total electrical energy consumed. To ensure their efficient operation, it is necessary to apply control systems with specific algorithms able to estimate rotation speed accurately and with an adequate response time. However, the angular speed sensors used in induction motors are generally expensive and unreliable, and they may be unsuitable for use in hostile environments. This paper presents an algorithm for speed estimation in three-phase induction motors using the chaotic variable of maximum density. The technique used in this work analyzes the current signals from the motor power supply without invasive sensors on its structure. The results show that speed estimation is achieved with a response time lower than that obtained by classical techniques based on the Fourier Transform. This technique allows for the provision of motor shaft speed values when operated under variable load.

(This article belongs to the Special Issue Applications of Chaos Theory to Complex Systems Analysis in Engineering)

Open Access Article

Elicitation of Rank Correlations with Probabilities of Concordance: Method and Application to Building Management

by Benjamin Ramousse, Miguel Angel Mendoza-Lugo, Guus Rongen and Oswaldo Morales-Nápoles

Entropy 2024, 26(5), 360; https://doi.org/10.3390/e26050360 - 25 Apr 2024

Abstract

Constructing Bayesian networks (BN) for practical applications presents significant challenges, especially in domains with limited empirical data available. In such situations, field experts are often consulted to estimate the model’s parameters, for instance, rank correlations in Gaussian copula-based Bayesian networks (GCBN). Because there

[...] Read more.

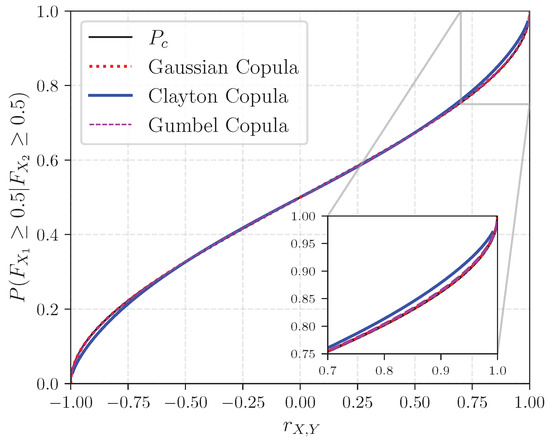

Constructing Bayesian networks (BN) for practical applications presents significant challenges, especially in domains with limited empirical data available. In such situations, field experts are often consulted to estimate the model’s parameters, for instance, rank correlations in Gaussian copula-based Bayesian networks (GCBN). Because there is no consensus on a ‘best’ approach for eliciting these correlations, this paper proposes a framework that uses probabilities of concordance for assessing dependence, and the dependence calibration score to aggregate experts’ judgments. To demonstrate the relevance of our approach, it is implemented to populate a GCBN intended to estimate the condition of air handling units’ components, a key challenge in building asset management. While the elicitation of concordance probabilities was well received by the questionnaire respondents, the analysis of the results reveals notable disparities in the experts’ ability to quantify uncertainty. Moreover, the application of the dependence calibration aggregation method was hindered by the absence of relevant seed variables, thus failing to evaluate the participants’ field expertise. All in all, while the authors do not recommend using the current model in practice, this study suggests that concordance probabilities should be further explored as an alternative approach for the elicitation of dependence.

(This article belongs to the Special Issue Bayesian Network Modelling in Data Sparse Environments)

Open Access Article

Computational Issues of Quantum Heat Engines with Non-Harmonic Working Medium

by Andrea R. Insinga, Bjarne Andresen and Peter Salamon

Entropy 2024, 26(5), 359; https://doi.org/10.3390/e26050359 - 25 Apr 2024

Abstract

►▼

Show Figures

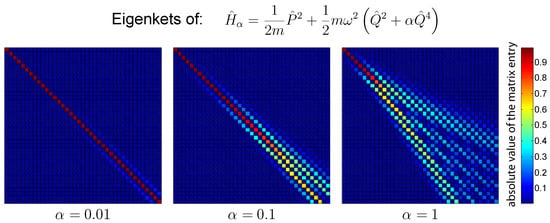

In this work, we lay the foundations for computing the behavior of a quantum heat engine whose working medium consists of an ensemble of non-harmonic quantum oscillators. In order to enable this analysis, we develop a method based on the Schrödinger picture. We

[...] Read more.

In this work, we lay the foundations for computing the behavior of a quantum heat engine whose working medium consists of an ensemble of non-harmonic quantum oscillators. In order to enable this analysis, we develop a method based on the Schrödinger picture. We investigate different possible choices of basis for expanding the density operator, as it is crucial to select a basis that will expedite the numerical integration of the time-evolution equation without compromising the accuracy of the computed results. For this purpose, we developed an estimation technique that allows us to quantify the error that is unavoidably introduced when time-evolving the density matrix expansion over a finite-dimensional basis. Using this and other ways of evaluating a specific choice of basis, we arrive at the conclusion that the basis of eigenstates of a harmonic Hamiltonian leads to the best computational performance. Additionally, we present a method to quantify and reduce the error that is introduced when extracting relevant physical information about the ensemble of oscillators. The techniques presented here are specific to quantum heat cycles; the coexistence within a cycle of time-dependent Hamiltonian and coupling with a thermal reservoir are particularly complex to handle for the non-harmonic case. The present investigation is paving the way for numerical analysis of non-harmonic quantum heat machines.

Open Access Article

Crude Oil Prices Forecast Based on Mixed-Frequency Deep Learning Approach and Intelligent Optimization Algorithm

by Wanbo Lu and Zhaojie Huang

Entropy 2024, 26(5), 358; https://doi.org/10.3390/e26050358 - 24 Apr 2024

Abstract

Precisely forecasting the price of crude oil is challenging due to its fundamental properties of nonlinearity, volatility, and stochasticity. This paper introduces a novel hybrid model, namely, the KV-MFSCBA-G model, within the decomposition–integration paradigm. It combines the mixed-frequency convolutional neural network–bidirectional long short-term memory network-attention mechanism (MFCBA) and generalized autoregressive conditional heteroskedasticity (GARCH) models. The MFCBA and GARCH models are employed to respectively forecast the low-frequency and high-frequency components decomposed through variational mode decomposition optimized by Kullback–Leibler divergence (KL-VMD). The classification of these components is performed using the fuzzy entropy (FE) algorithm. Therefore, this model can fully exploit the advantages of deep learning networks in fitting nonlinearities and traditional econometric models in capturing volatilities. Furthermore, the intelligent optimization algorithm and the low-frequency economic variable are introduced to improve forecasting performance. Specifically, the sparrow search algorithm (SSA) is employed to determine the optimal parameter combination of the MFCBA model, which is incorporated with monthly global economic conditions (GECON) data. The empirical findings of West Texas Intermediate (WTI) and Brent crude oil indicate that the proposed approach outperforms other models in evaluation indicators and statistical tests and has good robustness. This model can assist investors and market regulators in making decisions.

(This article belongs to the Section Multidisciplinary Applications)

Open Access Review

Unveiling the Future of Human and Machine Coding: A Survey of End-to-End Learned Image Compression

by Chen-Hsiu Huang and Ja-Ling Wu

Entropy 2024, 26(5), 357; https://doi.org/10.3390/e26050357 - 24 Apr 2024

Abstract

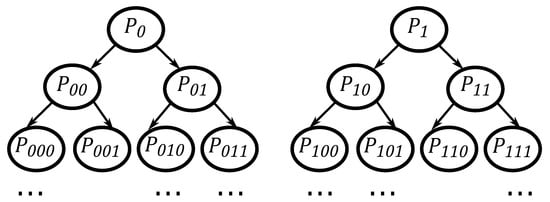

End-to-end learned image compression codecs have notably emerged in recent years. These codecs have demonstrated superiority over conventional methods, showcasing remarkable flexibility and adaptability across diverse data domains while supporting new distortion losses. Despite challenges such as computational complexity, learned image compression methods

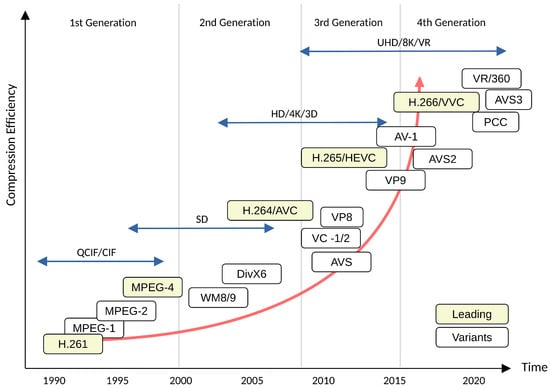

[...] Read more.

End-to-end learned image compression codecs have notably emerged in recent years. These codecs have demonstrated superiority over conventional methods, showcasing remarkable flexibility and adaptability across diverse data domains while supporting new distortion losses. Despite challenges such as computational complexity, learned image compression methods inherently align with learning-based data processing and analytic pipelines due to their well-suited internal representations. The concept of Video Coding for Machines has garnered significant attention from both academic researchers and industry practitioners. This concept reflects the growing need to integrate data compression with computer vision applications. In light of these developments, we present a comprehensive survey and review of lossy image compression methods. Additionally, we provide a concise overview of two prominent international standards, MPEG Video Coding for Machines and JPEG AI. These standards are designed to bridge the gap between data compression and computer vision, catering to practical industry use cases.

(This article belongs to the Special Issue Information Theory and Coding for Image/Video Processing)

Open Access Article

Computation of the Spatial Distribution of Charge-Carrier Density in Disordered Media

by Alexey V. Nenashev, Florian Gebhard, Klaus Meerholz and Sergei D. Baranovskii

Entropy 2024, 26(5), 356; https://doi.org/10.3390/e26050356 - 24 Apr 2024

Abstract

The space- and temperature-dependent electron distribution

(This article belongs to the Special Issue Recent Advances in the Theory of Disordered Systems)

Open Access Review

Flavor’s Delight

by Hans Peter Nilles and Saúl Ramos-Sánchez

Entropy 2024, 26(5), 355; https://doi.org/10.3390/e26050355 - 24 Apr 2024

Abstract

Discrete flavor symmetries provide a promising approach to understanding the flavor sector of the standard model of particle physics. Top-down (TD) explanations from string theory reveal two different types of such flavor symmetries: traditional and modular flavor symmetries that combine into the eclectic flavor group. There have been many bottom-up (BU) constructions to fit experimental data within this scheme. We compare TD and BU constructions to identify the most promising groups and try to give a unified description. Although there is some progress in joining BU and TD approaches, we point out some gaps that have to be closed with future model building.

(This article belongs to the Special Issue Particle Theory and Theoretical Cosmology—Dedicated to Professor Paul Howard Frampton on the Occasion of His 80th Birthday)

Open Access Article

Implications of Minimum Description Length for Adversarial Attack in Natural Language Processing

by Kshitiz Tiwari and Lu Zhang

Entropy 2024, 26(5), 354; https://doi.org/10.3390/e26050354 - 24 Apr 2024

Abstract

Investigating causality to establish novel criteria for training robust natural language processing (NLP) models is an active research area. However, current methods face various challenges such as the difficulties in identifying keyword lexicons and obtaining data from multiple labeled environments. In this paper,

[...] Read more.

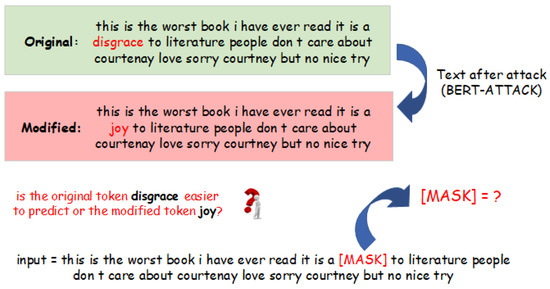

Investigating causality to establish novel criteria for training robust natural language processing (NLP) models is an active research area. However, current methods face various challenges such as the difficulties in identifying keyword lexicons and obtaining data from multiple labeled environments. In this paper, we study the problem of robust NLP from a complementary but different angle: we treat the behavior of an attack model as a complex causal mechanism and quantify its algorithmic information using the minimum description length (MDL) framework. Specifically, we use masked language modeling (MLM) to measure the “amount of effort” needed to transform from the original text to the altered text. Based on that, we develop techniques for judging whether a specified set of tokens has been altered by the attack, even in the absence of the original text data.

(This article belongs to the Section Information Theory, Probability and Statistics)

Open Access Article

LF3PFL: A Practical Privacy-Preserving Federated Learning Algorithm Based on Local Federalization Scheme

by Yong Li, Gaochao Xu, Xutao Meng, Wei Du and Xianglin Ren

Entropy 2024, 26(5), 353; https://doi.org/10.3390/e26050353 - 23 Apr 2024

Abstract

In the realm of federated learning (FL), the exchange of model data may inadvertently expose sensitive information of participants, leading to significant privacy concerns. Existing FL privacy-preserving techniques, such as differential privacy (DP) and secure multi-party computing (SMC), though offering viable solutions, face

[...] Read more.

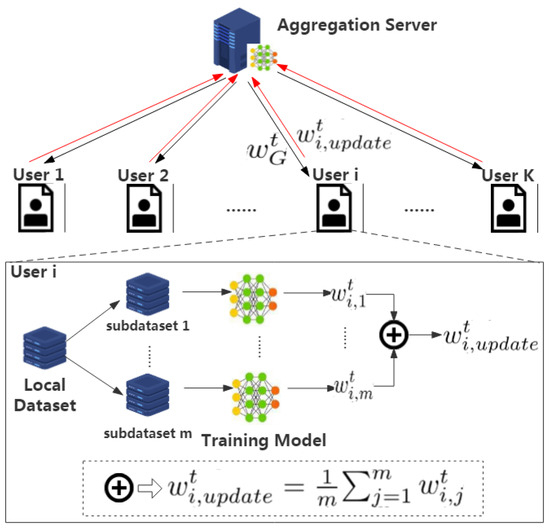

In the realm of federated learning (FL), the exchange of model data may inadvertently expose sensitive information of participants, leading to significant privacy concerns. Existing FL privacy-preserving techniques, such as differential privacy (DP) and secure multi-party computing (SMC), though offering viable solutions, face practical challenges including reduced performance and complex implementations. To overcome these hurdles, we propose a novel and pragmatic approach to privacy preservation in FL by employing localized federated updates (LF3PFL) aimed at enhancing the protection of participant data. Furthermore, this research refines the approach by incorporating cross-entropy optimization, carefully fine-tuning measurement, and improving information loss during the model training phase to enhance both model efficacy and data confidentiality. Our approach is theoretically supported and empirically validated through extensive simulations on three public datasets: CIFAR-10, Shakespeare, and MNIST. We evaluate its effectiveness by comparing training accuracy and privacy protection against state-of-the-art techniques. Our experiments, which involve five distinct local models (Simple-CNN, ModerateCNN, Lenet, VGG9, and Resnet18), provide a comprehensive assessment across a variety of scenarios. The results clearly demonstrate that LF3PFL not only maintains competitive training accuracies but also significantly improves privacy preservation, surpassing existing methods in practical applications. This balance between privacy and performance underscores the potential of localized federated updates as a key component in future FL privacy strategies, offering a scalable and effective solution to one of the most pressing challenges in FL.

(This article belongs to the Special Issue Information Security and Data Privacy)

Open Access Article

DiffFSRE: Diffusion-Enhanced Prototypical Network for Few-Shot Relation Extraction

by Yang Chen and Bowen Shi

Entropy 2024, 26(5), 352; https://doi.org/10.3390/e26050352 - 23 Apr 2024

Abstract

Supervised learning methods excel in traditional relation extraction tasks. However, the quality and scale of the training data heavily influence their performance. Few-shot relation extraction is gradually becoming a research hotspot whose objective is to learn and extract semantic relationships between entities with

[...] Read more.

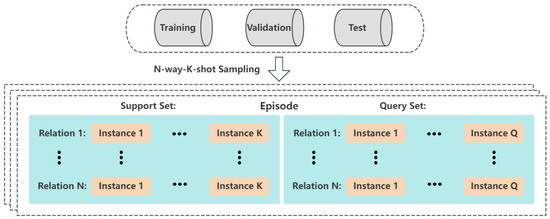

Supervised learning methods excel in traditional relation extraction tasks. However, the quality and scale of the training data heavily influence their performance. Few-shot relation extraction is gradually becoming a research hotspot whose objective is to learn and extract semantic relationships between entities with only a limited number of annotated samples. In recent years, numerous studies have employed prototypical networks for few-shot relation extraction. However, these methods often suffer from overfitting of the relation classes, making it challenging to generalize effectively to new relationships. Therefore, this paper seeks to utilize a diffusion model for data augmentation to address the overfitting issue of prototypical networks. We propose a diffusion model-enhanced prototypical network framework. Specifically, we design and train a controllable conditional relation generation diffusion model on the relation extraction dataset, which can generate the corresponding instance representation according to the relation description. Building upon the trained diffusion model, we further present a pseudo-sample-enhanced prototypical network, which is able to provide more accurate representations for prototype classes, thereby alleviating overfitting and better generalizing to unseen relation classes. Additionally, we introduce a pseudo-sample-aware attention mechanism to enhance the model’s adaptability to pseudo-sample data through a cross-entropy loss, further improving the model’s performance. A series of experiments are conducted to prove our method’s effectiveness. The results indicate that our proposed approach significantly outperforms existing methods, particularly in low-resource one-shot environments. Further ablation analyses underscore the necessity of each module in the model. As far as we know, this is the first research to employ a diffusion model for enhancing the prototypical network through data augmentation in few-shot relation extraction.

(This article belongs to the Special Issue Natural Language Processing and Data Mining)

Open Access Article

An Efficient Image Cryptosystem Utilizing Difference Matrix and Genetic Algorithm

by

Honglian Shen and Xiuling Shan

Entropy 2024, 26(5), 351; https://doi.org/10.3390/e26050351 - 23 Apr 2024

Abstract

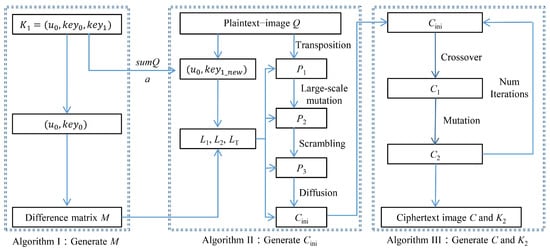

To address the security and efficiency challenges of image transmission, this paper proposes an efficient image cryptosystem based on a difference matrix and a genetic algorithm. A difference matrix is a typical combinatorial structure that exhibits properties of discretization and approximate uniformity. It can serve as a pseudo-random sequence, offering various scrambling techniques while occupying little storage space. The genetic algorithm generates multiple ciphertext images with strong randomness through local crossover and mutation operations, then obtains high-quality ciphertext images through multiple iterations using the optimal preservation strategy. The encryption process is divided into three stages: first, the difference matrix is generated; second, it is used for initial encryption to ensure that the resulting ciphertext image has relatively good initial randomness; finally, multiple rounds of local genetic operations are used to optimize the output. Simulation experiments and statistical analyses demonstrate that the proposed cryptosystem is effective and robust, highlighting its superiority over other existing algorithms.

(This article belongs to the Topic AI and Computational Methods for Modelling, Simulations and Optimizing of Advanced Systems: Innovations in Complexity)

Open Access Article

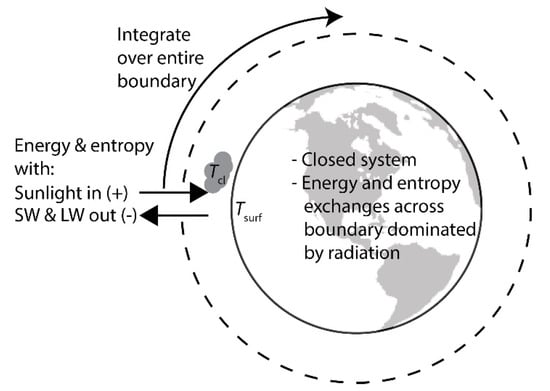

Planetary Energy Flow and Entropy Production Rate by Earth from 2002 to 2023

by

Elijah Thimsen

Entropy 2024, 26(5), 350; https://doi.org/10.3390/e26050350 - 23 Apr 2024

Abstract

In this work, satellite data from the Clouds and Earth’s Radiant Energy System (CERES) and Moderate Resolution Imaging Spectroradiometer (MODIS) instruments are analyzed to determine how the global absorbed sunlight and global entropy production rates have changed from 2002 to 2023. The data is used to test hypotheses derived from the Maximum Power Principle (MPP) and Maximum Entropy Production Principle (MEP) about the evolution of Earth’s surface and atmosphere. The results indicate that both the rate of absorbed sunlight and global entropy production have increased over the last 20 years, which is consistent with the predictions of both hypotheses. Given the acceptance of the MPP or MEP, some peripheral extensions and nuances are discussed.

(This article belongs to the Collection Disorder and Biological Physics)

Open Access Article

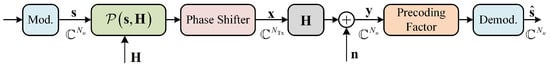

Efficient Constant Envelope Precoding for Massive MU-MIMO Downlink via Majorization-Minimization Method

by

Rui Liang, Hui Li, Yingli Dong and Guodong Xue

Entropy 2024, 26(4), 349; https://doi.org/10.3390/e26040349 - 21 Apr 2024

Abstract

The practical implementation of massive multi-user multi-input–multi-output (MU-MIMO) downlink communication systems requires power amplifiers that are energy efficient; otherwise, the power consumption of the base station (BS) will be prohibitive. Constant envelope (CE) precoding is gaining increasing interest for its capability to utilize low-cost, high-efficiency nonlinear radio frequency amplifiers. Our work focuses on the topic of CE precoding in massive MU-MIMO systems and presents an efficient CE precoding algorithm. This algorithm uses an alternating minimization (AltMin) framework to optimize the CE precoded signal and precoding factor, aiming to minimize the difference between the received signal and the transmit symbol. For the optimization of the CE precoded signal, we provide a powerful approach that integrates the majorization-minimization (MM) method and the fast iterative shrinkage-thresholding (FISTA) method. This algorithm combines the characteristics of the massive MU-MIMO channel with the second-order Taylor expansion to construct the surrogate function in the MM method, in which minimizing this surrogate function is the worst case of the system. Specifically, we expand the suggested CE precoding algorithm to involve the discrete constant envelope (DCE) precoding case. In addition, we thoroughly examine the exact property, convergence, and computational complexity of the proposed algorithm. Simulation results demonstrate that the proposed CE precoding algorithm can achieve an uncoded bit error rate (BER) performance gain of roughly

(This article belongs to the Special Issue Information Theory for MIMO Systems)

Topics

Topic in

Algorithms, Computation, Entropy, Fractal Fract, MCA

Analytical and Numerical Methods for Stochastic Biological Systems

Topic Editors: Mehmet Yavuz, Necati Ozdemir, Mouhcine Tilioua, Yassine Sabbar

Deadline: 10 May 2024

Topic in

Algorithms, Diagnostics, Entropy, Information, J. Imaging

Application of Machine Learning in Molecular Imaging

Topic Editors: Allegra Conti, Nicola Toschi, Marianna Inglese, Andrea Duggento, Matthew Grech-Sollars, Serena Monti, Giancarlo Sportelli, Pietro Carra

Deadline: 31 May 2024

Topic in

Education Sciences, Entropy, JAL, Societies, Sustainability

Sustainability in Aging and Depopulation Societies

Topic Editors: Shiro Horiuchi, Gregor Wolbring, Takeshi Matsuda

Deadline: 15 June 2024

Topic in

Buildings, Energies, Entropy, Resources, Sustainability

Advances in Solar Heating and Cooling

Topic Editors: Salvatore Vasta, Sotirios Karellas, Marina Bonomolo, Alessio Sapienza, Uli Jakob

Deadline: 30 June 2024

Conferences

22–26 November 2024

2024 International Conference on Science and Engineering of Electronics (ICSEE'2024), Wuhan, China

28–31 May 2024

XXII Conference on Non-equilibrium Statistical Mechanics and Nonlinear Physics—MEDYFINOL 2024

Special Issues

Special Issue in

Entropy

The Landauer Principle and Its Implementations in Physics, Chemistry and Biology: Current Status, Critics and Controversies

Guest Editor: Edward Bormashenko

Deadline: 30 April 2024

Special Issue in

Entropy

Information Theory for Interpretable Machine Learning

Guest Editors: Marco Piangerelli, Sotiris Kotsiantis

Deadline: 15 May 2024

Special Issue in

Entropy

Entropy, Statistical Evidence, and Scientific Inference: Evidence Functions in Theory and Applications

Guest Editors: Brian Dennis, Mark L. Taper, Jose Miguel Ponciano

Deadline: 31 May 2024

Special Issue in

Entropy

Nonlinear Dynamics in Cardiovascular Signals

Guest Editor: Claudia Lerma

Deadline: 15 June 2024

Topical Collections

Topical Collection in

Entropy

Algorithmic Information Dynamics: A Computational Approach to Causality from Cells to Networks

Collection Editors: Hector Zenil, Felipe Abrahão

Topical Collection in

Entropy

Wavelets, Fractals and Information Theory

Collection Editor: Carlo Cattani

Topical Collection in

Entropy

Entropy in Image Analysis

Collection Editor: Amelia Carolina Sparavigna